Windows Server Failover Clustering: Why Cluster Quorum Matters

Many people, both newcomers to clustering and those who have used the feature for years, don’t understand the purpose of quorum in Windows clustering. The common misconception is that quorum is used to help failover happen. That’s close, but not correct. Quorum exists to help a cluster decide which nodes should keep functioning when the heartbeats between nodes fail, typically because of a networking issue. In this article I’ll explain the function of cluster quorum in Windows Server failover clustering.

Function of Quorum

Let’s go back to basics. Every node sends a unicast heartbeat to every other node in the cluster. This heartbeat checks for cluster node responsiveness. If a node fails to respond, then it is considered offline. If a network fails, a healthy node can appear to be offline, which is a false positive. That’s why we recommend having a minimum of two cluster networks. A clustering virtual adapter called NetFT automatically and transparently teams those networks (you really have to go looking for it to know that it’s there) and provides the heartbeat with network path fault tolerance. But what happens if both those links go offline?
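To illustrate why a second cluster network matters, here is a minimal Python sketch of the idea. It is not how NetFT is implemented; the function name and the network names are hypothetical and exist only for this illustration. The assumption is simply that a peer counts as reachable if a heartbeat gets through on at least one network.

```python
from typing import Dict


def node_is_reachable(heartbeat_ok_by_network: Dict[str, bool]) -> bool:
    """Treat a peer as reachable if a heartbeat succeeded on any network."""
    return any(heartbeat_ok_by_network.values())


# One of the two cluster networks is down: the heartbeat still gets through.
one_path_down = {"cluster-net-1": False, "cluster-net-2": True}

# Both networks are down: the peer looks offline even if it is still running.
all_paths_down = {"cluster-net-1": False, "cluster-net-2": False}

print(node_is_reachable(one_path_down))
print(node_is_reachable(all_paths_down))
```

The second case is the one quorum exists to handle: the peer may be perfectly healthy, but nothing on this side of the break can tell.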

Explaining Quorum with Examples

The definition of “quorum” according to Oxford Dictionaries is “the minimum number of members of an assembly or society that must be present at any of its meetings to make the proceedings of that meeting valid.” Keep that in mind as we look at some cluster quorum examples.

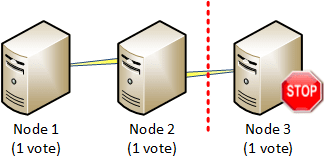

In my first example, we have three nodes and the networking fails, isolating Node 3 from Nodes 1 and 2. Nodes 1 and 2 can see each other, but they cannot see Node 3, and Node 3 cannot see Nodes 1 and 2. When nodes lose contact like this, the cluster will attempt to achieve quorum. It needs to decide two things: which side of the failure should remain operational, and thus where to fail over the highly available (HA) resources.

This prevents HA resources from attempting to be active on both sides of the failure. This is of huge importance when you implement a multisite cluster, where HA resources (such as virtual machines) could come online in the primary and secondary sites at the same time and lead to service and data corruption when the links come back online.

In the simple example below, each node has one vote. Both sides of the failure will cast votes to see who should remain operational. The cluster has three nodes and a total of three votes (one per node). Nodes 1 and 2 add up to two votes. They have more than half of the total votes, achieving quorum, so they will continue to function and attempt to bring online the HA resources that were on Node 3. Node 3 has just one vote, which is less than half of the total, so Node 3 will temporarily cease being an active member of the cluster and will not attempt to participate in running or failing over HA resources.
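The vote arithmetic above can be sketched in a few lines of Python. This is only an illustration of the majority rule described in this article, not the cluster service's actual logic; the `has_quorum` function and the node names are made up for the example.

```python
def has_quorum(votes_present: int, total_votes: int) -> bool:
    """A partition has quorum only with strictly more than half of all votes."""
    # Integer comparison avoids any floating-point rounding.
    return 2 * votes_present > total_votes


TOTAL_VOTES = 3  # three nodes, one vote each

majority_side = ["Node1", "Node2"]  # these two can still see each other
isolated_side = ["Node3"]           # cut off by the network failure

print(has_quorum(len(majority_side), TOTAL_VOTES))  # True: 2 of 3 votes
print(has_quorum(len(isolated_side), TOTAL_VOTES))  # False: 1 of 3 votes
```

Note that the comparison is always against the total number of votes in the cluster, not against the number of nodes a partition can currently see.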

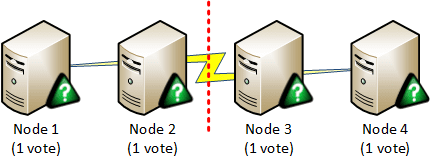

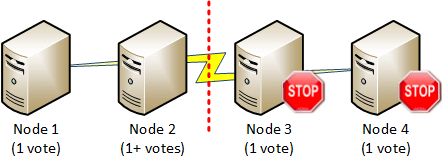

That was a simple example. But what happens if there is an even number of nodes and the network break splits the cluster into two equal halves? Take a four-node cluster split down the middle. Nodes 1 and 2 have a sum of two votes. That is not more than half of the four total votes, so they have not achieved quorum. Nodes 3 and 4 also have just two votes and also have not achieved quorum. Neither side can continue, so we end up with a malfunctioning cluster.

We need to break the vote somehow. That could be done by giving the voting members some way to perform a tiebreak – maybe by giving a member a “super vote” or by disabling one member’s vote.
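Both tiebreak ideas can be tried out in a small Python sketch. It is a generic illustration of weighted voting under the majority rule, not a description of how Windows Server implements its quorum configurations; the function, the node names, and the vote weights are all hypothetical.

```python
from typing import Dict, Set


def has_quorum(partition: Set[str], votes: Dict[str, int]) -> bool:
    """True if the partition holds strictly more than half of all votes."""
    total = sum(votes.values())
    present = sum(votes[member] for member in partition)
    return 2 * present > total


side_a = {"Node1", "Node2"}
side_b = {"Node3", "Node4"}

# Even split: 2 votes against 2 out of 4, so neither side has a majority.
even_votes = {"Node1": 1, "Node2": 1, "Node3": 1, "Node4": 1}

# Option 1: give one member a "super vote" (Node1 counts twice, 5 votes total).
super_vote = {"Node1": 2, "Node2": 1, "Node3": 1, "Node4": 1}

# Option 2: disable one member's vote (Node4 counts for nothing, 3 votes total).
disabled_vote = {"Node1": 1, "Node2": 1, "Node3": 1, "Node4": 0}

for label, votes in [("even", even_votes),
                     ("super vote", super_vote),
                     ("disabled vote", disabled_vote)]:
    print(label, has_quorum(side_a, votes), has_quorum(side_b, votes))
```

In both tiebreak variants the total number of votes becomes odd, so exactly one side of an even split can hold a majority.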

Windows Failover Clustering Quorum

Windows Server failover clustering has mechanisms for achieving cluster quorum after a failure. These mechanisms have evolved over the years, from requiring lots of care and administration to a much simpler system in Windows Server 2012 that can tolerate unusual host failure scenarios. In a future post, I will discuss the possible configurations and which options to choose.