How Windows Server Containers Work

In “What are Windows Server Containers?,” I discussed why many businesses will embrace Windows Server Containers with the release of Windows Server 2016. In this post, I will explain how Windows Server Containers work, as the feature is outlined in the Windows Server 2016 Technical Preview 3 (TPv3).

Windows Server Container Goals

The objective of Windows Server Containers is not to provide another way to deploy legacy applications. If you have traditional applications that each need their own machine, use local persistent storage, and are authenticated using Active Directory, then you should use machine virtualization technologies such as on-premises Hyper-V or vSphere, or public clouds such as Azure or AWS.

Containers give us the ability to deploy a service from a reusable template in seconds. No matter how many times you deploy from that template, you will get the same result. If you’re working in software development or DevOps, then this should be music to your ears.

Windows Server Containers Terminology

There are a number of terms to understand with Windows Server Containers:

- Host: A host is a machine that runs containers. The machine can be physical or virtual. A virtual host is referred to as a VM host.

- Repository: This is a flat-file store of reusable images. In TPv3, the repository is kept on the host, but later releases might allow the repository to be shared between hosts.

- Container OS Image: When you deploy a container based on Windows, it will be based on an OS image of a Server Core installation of Windows Server 2016.

- Container Image: Container images allow you to capture the changes made to a container. Once you have captured an image, you can reuse it to recreate the source container repeatedly.

- Hyper-V Container: A later technical preview of Windows Server 2016 will add Hyper-V containers to the mix. Hyper-V is used to securely isolate containers.

Building Containers from the Repository

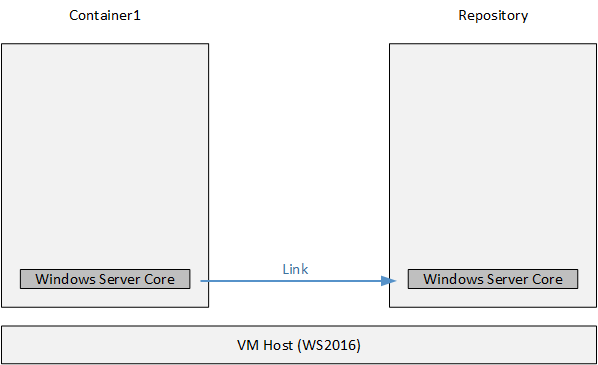

You can understand the security and viability of Windows Server Containers a bit more once you understand how the repository is used. The repository is a library, and everything is not just built from that library but also linked to it. Let’s look at how the process works in TPv3.

Building a container starts with creating a new container using an image from the repository as a source. In Windows Server Containers, you will start with an image called WindowsServerCore. This process does not copy the image from the repository to the container; instead, the new container links to the image in the repository. It is this linking that makes containers so fast at deploying services.

You can start the container and begin making changes. Any changes you make are stored persistently with the container. Containers are not stateless, but they are not the same as virtual machines, either.
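The linking behavior described above can be illustrated with a small conceptual model. This is only a sketch, not how Windows implements it: the file paths and the `new_container` helper are hypothetical, and Python's `ChainMap` stands in for the link between a container's own writable layer and the read-only image in the repository.

```python
from collections import ChainMap
from types import MappingProxyType

# Hypothetical stand-in for the base OS image in the repository (read-only).
base_image = MappingProxyType({
    r"C:\Windows\System32\kernel32.dll": "os-file",
    r"C:\Windows\System32\cmd.exe": "os-file",
})


def new_container(image):
    """Create a container view: an empty writable layer linked to the image.

    Nothing is copied from the image; lookups fall through to it.
    """
    return ChainMap({}, image)


container = new_container(base_image)

# A change made inside the container is stored in the container's own layer.
container[r"C:\RuntimeA\runtime.exe"] = "runtime-file"

print(sorted(container))          # everything visible inside the container
print(dict(container.maps[0]))    # only the differences live in the container
print(sorted(base_image))         # the shared image is untouched
```

Because creation only sets up an empty layer and a link, it costs the same no matter how large the image is, which is the intuition behind the fast deployments.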

A container isn’t a machine, so you cannot log into it; however, you can use Invoke-Command to run commands inside a container or Enter-PSSession to create an interactive PowerShell session to a container. This lets you install software in the container, such as runtimes or applications. Note that you must be able to install your software using the command line, PowerShell, or an unattended installation.

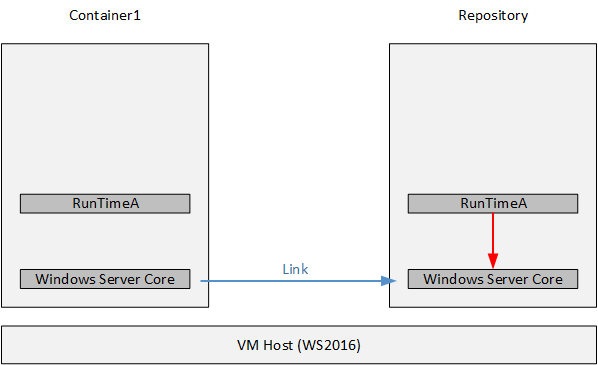

Let’s imagine that we’ve installed a runtime called RunTimeA into our new container. We can stop the container and then create a new container image in the repository. The creation process captures the changes to the container and stores those changes as a container image. The new container image has a parent-child link to the image that was used to create the original container. For example, if we created a container from the WindowsServerCore image, installed RunTimeA, and then created a container image from the results, the new container image contains only the installation of RunTimeA and links to WindowsServerCore.
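To make the capture step concrete, here is a minimal sketch of an image that stores only its differences plus a link to its parent. The `Image`, `Container`, and `capture` names and the file paths are assumptions made for illustration; they are not part of any Windows API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Image:
    """A repository image: only its own differences plus a link to its parent."""
    name: str
    files: Dict[str, str]
    parent: Optional["Image"] = None

    def chain(self) -> List[str]:
        """Return this image's name followed by the names of its ancestors."""
        names, node = [], self
        while node is not None:
            names.append(node.name)
            node = node.parent
        return names


@dataclass
class Container:
    """A container links to an image and stores its own changes."""
    image: Image
    changes: Dict[str, str] = field(default_factory=dict)


def capture(container: Container, name: str) -> Image:
    """Store the container's changes as a new image whose parent is the source image."""
    return Image(name=name, files=dict(container.changes), parent=container.image)


os_image = Image("WindowsServerCore", {"C:/Windows/System32/cmd.exe": "os"})

first = Container(os_image)
first.changes["C:/RunTimeA/runtime.exe"] = "runtime"  # simulate installing the runtime

runtime_image = capture(first, "RunTimeA")
print(runtime_image.chain())
print(runtime_image.files)
```

The captured image holds a single file, the runtime, while the operating system files stay in the parent image and are reached through the link.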

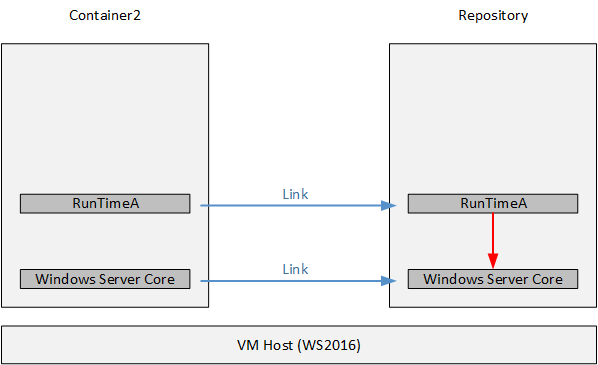

Let’s put aside that first container for a moment and create a second container. Imagine this scenario: I am a developer, and I want a container with RunTimeA. I select RunTimeA as the source image. Because there is a dependency on WindowsServerCore, both the child and the parent images are used to create the container. And once again, no images are moved or copied. Instead, a link is created from the second container to the original images in the repository, and all changes are stored in the container.

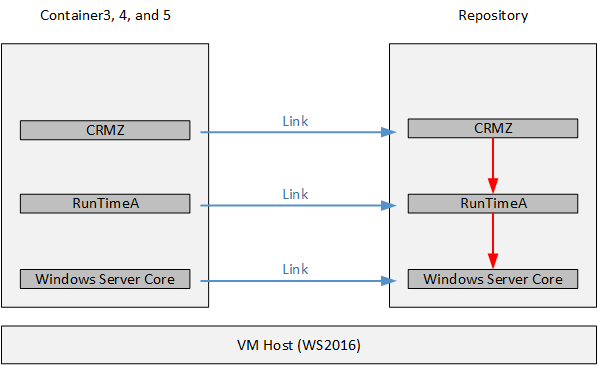

That developer wants to be able to develop, test, and deploy a new application called CRMZ. The dev connects to the second container and deploys CRMZ. They shut down the container and create a new container image, which stores the changes caused by the software installation. The new container image, CRMZ, has a child-parent relationship with RunTimeA, which in turn has a child-parent relationship with WindowsServerCore.

Now the developer can create three containers (one for development, one for testing, and one for production) using the CRMZ container image as the source, and all of the dependencies will be in place. Once again, no images are moved or copied; links are used. This ensures that each container is identical and that the container creation process is fast.
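The dependency chain and the identical deployments can be sketched as follows. This is a conceptual model under stated assumptions: the `repository` dictionary, the file names, and the `image_chain`, `effective_files`, and `create_container` helpers are all hypothetical and only mirror the CRMZ example in the text.

```python
from typing import Dict, List, Optional

# Hypothetical repository: each image stores only its own files and its parent's name.
repository: Dict[str, dict] = {
    "WindowsServerCore": {"parent": None, "files": {"cmd.exe": "os"}},
    "RunTimeA": {"parent": "WindowsServerCore", "files": {"runtime.exe": "runtime"}},
    "CRMZ": {"parent": "RunTimeA", "files": {"crmz.exe": "app"}},
}


def image_chain(name: Optional[str]) -> List[str]:
    """Follow parent links from the given image back to the base OS image."""
    chain = []
    while name is not None:
        chain.append(name)
        name = repository[name]["parent"]
    return chain


def effective_files(name: str) -> Dict[str, str]:
    """Build the view a container sees: parents first, children layered on top."""
    view: Dict[str, str] = {}
    for layer in reversed(image_chain(name)):
        view.update(repository[layer]["files"])
    return view


def create_container(label: str, image: str) -> dict:
    """A new container is just a link to an image plus an empty set of changes."""
    return {"label": label, "image": image, "changes": {}}


containers = [create_container(label, "CRMZ") for label in ("dev", "test", "prod")]
views = [effective_files(c["image"]) for c in containers]

print(image_chain("CRMZ"))
print(all(view == views[0] for view in views))
```

Every container resolves the same chain of images, so each one starts from the same set of files, and none of them stores anything until it is changed.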

A business could quickly build up a library of images with reduced installation efforts. If someone else is deploying WebServerX that also requires RunTimeA, then they don’t need to install RunTimeA. They can simply create a container from the RunTimeA container image, deploy WebServerX, and capture it. Now anyone can deploy WebServerX, and in turn, create new container images from that.

What you’ve seen so far is:

- Quick: By using links, container creation is very fast.

- Reduced storage: You don’t need an entire OS installation and its own allocation of memory for each container, as you would for each virtual machine. Only differences are stored in a container, and images use child-parent relationships to reduce their size.

- Repeatable deployments: If you deploy a container image one thousand times, there will be one thousand identical containers.

- Reduced effort: How many times are PHP and Java deployed in a single business? Containers will reduce the human effort and standardize deployments.

Remember:

- Containers are designed for born-in-the-cloud services. Don’t even imagine that you’ll install a domain controller or an Exchange server into a container!

- Containers are supposed to be, to quote an old 1990 rock song, “easy come, easy go” (bare, hairy-chested leather jacket optional). If your service stores data, then store it outside of the container. You need to be able to afford to lose a container.

- There is no high availability with containers. A VM host can run on a Hyper-V cluster, but you cannot cluster a container — see ‘born in the cloud.’

- Active Directory membership is not supported in containers. You’ll need to seek out other cloud-friendly forms of security and policy.

This is why Windows Server Container production deployments are going to be a rare sight for most of us, but for those with suitable services, this new deployment option will be a serious game changer.