Windows Server 2012 or WS2012 R2 Hyper-V Cluster Requirements

If you’ve been following along, you may recall a previous article in which I explained the role that Windows Server failover clusters play in making Hyper-V hosts highly available. In today’s article, I will explain the requirements for building a Windows Server 2012 / R2 Hyper-V cluster.

Build a Windows Server 2012 / R2 Hyper-V Cluster: Requirements

Host Servers

You will require two or more servers to function as Hyper-V hosts. Actually, that’s not strictly true. You can build a new cluster with just one server (node). That allows you to build a cluster when you are temporarily short on hardware, albeit without actually having virtual machine high availability (HA) until you add a second host.

Ideally you will use identical servers when you build the cluster. This will simplify support of the servers, of Windows Server, and of your storage solution (more on that later). However, from the Microsoft perspective, the servers need only be fairly similar.

The processors must be either all-Intel or all-AMD to allow live migration. Hyper-V does allow you to mix generations (feature sets) of processor from the same manufacturer, but to live migrate between them you must enable processor compatibility mode in the processor settings of each virtual machine. This hides the advanced processor features from the guest.
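The processor rule above can be sketched as a small pre-build check. This is only an illustration, not a Microsoft tool: the `Host` record, its fields, and the returned strings are all hypothetical, and comparing model labels as text is a crude stand-in for comparing real processor feature sets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    # All fields are hypothetical inventory data, entered by hand.
    name: str
    cpu_vendor: str  # e.g. "Intel" or "AMD"
    cpu_model: str   # free-text model/generation label


def live_migration_readiness(hosts):
    """Classify a proposed set of cluster hosts by processor compatibility."""
    vendors = {h.cpu_vendor.strip().lower() for h in hosts}
    if len(vendors) > 1:
        # Mixed Intel/AMD hosts cannot live migrate between each other.
        return "blocked: mixed processor vendors"
    models = {h.cpu_model.strip().lower() for h in hosts}
    if len(models) > 1:
        # Same vendor, different generations: advanced processor features
        # must be hidden from each virtual machine.
        return "needs processor compatibility mode"
    return "ok"
```

For example, two hosts with identical Intel processors return `"ok"`, while an Intel host paired with an AMD host is reported as blocked.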

My advice: Keep it simple. Keep the hosts identical and get the newest processor that you can afford. Doing that will probably mean that the processor will still be available after a year when you need to add host capacity to the cluster.

Management Operating System

Only certain editions of Windows Server supported failover clustering prior to Windows Server 2012 (WS2012):

- Hyper-V Server

- Enterprise edition

- Datacenter edition

Since WS2012, there is no Enterprise edition and the Standard edition can (technically) be used for the management OS of your clustered Hyper-V hosts, in addition to the Datacenter edition and the free Hyper-V Server. Due to the rules of running Windows Server in virtual machines (on any virtualization platform) you will probably use the Datacenter edition. (I am deliberately not getting into licensing in this post!)

Supported Shared Storage

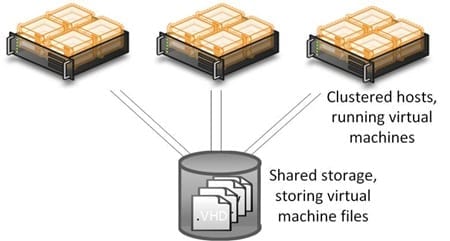

Think about what HA and live migration offer. Live migration allows us to move virtual machines from one host to another. Without shared storage, we must also move the files of the virtual machine, using shared-nothing live migration. That means moving hundreds of gigabytes or even terabytes of files. That would be incredibly slow, even with InfiniBand networking. If a cluster is considered a normal boundary for live migration (a boundary, not a restriction), then live migration will be quicker if every host in the cluster shares a common storage system to store the files of the virtual machines.

In the case of HA, if a host goes offline then the remaining hosts need access to the files of the virtual machines that are going to be automatically failed over. That won’t work too well if the files were stored on the D drive of the now dead host! So one of the requirements of a cluster is a shared storage platform that is common to all hosts in the cluster and is supported by failover clustering. The types of storage connectivity that are supported are:

- SAS SAN: Often an economical SAN option that offers excellent performance but with limited numbers of hosts.

- iSCSI SAN: Improved scalability over a SAS SAN with 1 Gbps or 10 Gbps connections from the hosts. Many software-based storage solutions use iSCSI for the connection.

- Fibre Channel SAN: A more expensive SAN option in which the hosts are connected to the storage using expensive but low-latency Fibre Channel cables.

- Fibre Channel over Ethernet (FCoE): FCoE is a hardware convergence solution that enables blade servers to connect to a Fibre Channel SAN over 10 Gbps Ethernet networks that are also used for host and cluster networking.

- PCI RAID: This option is relatively new, allowing two-node clusters to connect to relatively cheap shared storage.

- Storage Spaces: You can use this Windows Server feature in conjunction with a supported JBOD (just a bunch of disks) as the shared storage system in a WS2012 or later failover cluster.

- SMB 3.0: You can connect your hosts to shared folders via this flexible, high-performance protocol. The shares could be on a file server, or on a scale-out file server cluster (using any of the cluster-supported storage systems).

A cluster aims to provide HA so it makes sense to build HA into the storage system. Deploy two connections between each host and the cluster storage. (Consult your storage manufacturer for guidance – in fact, that’s the common answer for many cluster design questions!)

Clustered Hyper-V hosts with shared storage.

Networking

A clustered host will require a number of networks. Note that I have deliberately not said “a number of network connections.” That’s because we can converge networks into a high-capacity NIC or collection of NICs:

- Management: Used to control the management OS

- Virtual switch: Used to allow virtual machines to access the physical network

- 2 x cluster networks: Used for cluster communications (including the heartbeat), live migration, and redirected IO

In addition to this, you might need two NICs for iSCSI (with MPIO), a NIC for backup, or more if your design mandates it.
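The role list above works as a checklist that is independent of how many physical NICs you have. The following is a minimal sketch, assuming a hand-written description of the design: the role identifiers and the NIC and team names are made up for illustration and do not correspond to any Windows setting.

```python
# Roles named in the list above; the identifiers themselves are illustrative.
REQUIRED_ROLES = frozenset(
    {"management", "virtual_switch", "cluster_1", "cluster_2"}
)


def missing_roles(design):
    """Return the required network roles that no NIC or team carries.

    `design` maps a hypothetical NIC/team name to the collection of roles
    converged onto it.
    """
    covered = set()
    for roles in design.values():
        covered.update(roles)
    return sorted(REQUIRED_ROLES - covered)


# A fully converged design: one team carries every required role.
converged = {
    "team0": ["management", "virtual_switch", "cluster_1", "cluster_2"],
}

# A partial design that has forgotten the cluster networks.
partial = {"nic1": ["management"], "nic2": ["virtual_switch"]}
```

Here `missing_roles(converged)` returns an empty list, while `missing_roles(partial)` reports both cluster networks as missing. Extra roles such as iSCSI or backup can be added to a design without affecting the result.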

Please read my previous posts on converged networking to understand what your options are when it comes to designing the networks of a Hyper-V cluster.

Support

Microsoft will support your design if it passes the tests of the Cluster Validation Wizard in Failover Cluster Manager (a pass with warnings still counts). This is not enough for a reliable real-world design. Make sure that your hardware manufacturer will be happy (this is particularly pertinent for those of you using a SAN). And be sure to get the latest drivers and firmware for everything from the manufacturers; treat the drivers that come with Windows Server as stable enough only to get you online so that you can download the drivers that you really need.
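The difference between "supported" and "ready for the real world" can be written down as two separate checks. This is a sketch under stated assumptions: the result labels (`"pass"`, `"warning"`, `"fail"`) and the two boolean inputs are hypothetical values that you would record by hand, not output read from the validation wizard.

```python
def microsoft_supported(validation_results):
    """True if every validation test passed, with or without warnings.

    `validation_results` maps a test name to "pass", "warning", or "fail"
    (hypothetical labels, entered manually from the validation report).
    """
    return all(
        result in ("pass", "warning")
        for result in validation_results.values()
    )


def ready_for_production(validation_results, vendor_approved, drivers_current):
    """Stricter check: supported, vendor-approved, and on current drivers."""
    return (
        microsoft_supported(validation_results)
        and vendor_approved
        and drivers_current
    )
```

A design that validates with warnings is supported, but it only counts as ready here once the hardware manufacturer has approved it and the drivers and firmware are up to date.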