The most common problems I hear about in the Hyper-V world are related to networking, with complaints such as “my virtual machine is losing network connectivity” or “my host crashes when I do X on the network.” In this post, I’m going to list a number of fixes that will help.

Use Logo Tested Adapters

If your NIC is not on the hardware compatibility list for your Hyper-V version, such as Windows 8.1 or Windows Server 2012 R2, then none of my fixes will help. You took a risk by using a NIC that Microsoft hasn’t tested, and now you are paying for it.

Firmware and Drivers

The number one fix addresses a problem that should never occur in the first place. The first thing I do when building a new physical server, such as a Hyper-V host, is run the manufacturer’s update tool. This will download and install the latest firmware and drivers for that server. But if you wander through the TechNet forums, you’ll find that only a few people seem to do this essential step.

I don’t care how new the server is. I don’t care if you believe that the server is up to date. And I don’t care if you think that Dell, HP, or anyone else should have or might have updated the server before it shipped. They didn’t. Go to the support page for your host model, download the updating tool, and install all of the latest drivers and firmware.

A word of warning: if a network card or any other component in your server was supplied by the server manufacturer, do not download its drivers or firmware directly from the component vendor. For example, you can brick an HP blade by installing Emulex updates that were downloaded directly from Emulex. Server manufacturers often use custom chipsets, and therefore custom drivers and firmware, so you should always get these updates from the server manufacturer and not use the more general ones.

Disable VMQ

VMQ is a networking hardware offload that improves the processing of inbound packets to the virtual switch. It is a hugely beneficial feature for 10 Gbps or faster NICs. Unfortunately, the manufacturers of some 1 GbE NICs (I’m looking at you, Broadcom) enable VMQ by default, and it causes problems. Disable VMQ on those 1 GbE NICs because (a) it’s probably doing nothing for you, and (b) the NIC is causing problems.
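If you have a lot of hosts to check, the rule above is easy to script. What follows is only a minimal sketch in Python: the `Adapter` class, the `vmq_disable_commands` function, and the sample inventory are all hypothetical names that I made up for illustration, and I am assuming that you have already collected each adapter's name, link speed, and VMQ state (for example, from the output of `Get-NetAdapterVmq` on the host). The only thing it emits is the `Disable-NetAdapterVmq` cmdlet from the NetAdapter PowerShell module, one line per NIC that is slower than 10 Gbps and still has VMQ enabled.

```python
from dataclasses import dataclass

GBPS = 1_000_000_000  # bits per second in 1 Gbps


@dataclass
class Adapter:
    """Hypothetical record for one physical NIC on a Hyper-V host."""
    name: str            # adapter name as shown by Get-NetAdapter
    link_speed_bps: int  # negotiated link speed in bits per second
    vmq_enabled: bool    # True if VMQ is currently enabled on the NIC


def vmq_disable_commands(adapters, threshold_bps=10 * GBPS):
    """Return PowerShell commands that disable VMQ on slow NICs.

    A NIC qualifies when VMQ is enabled and its link speed is below
    threshold_bps (10 Gbps by default, following the advice above).
    """
    commands = []
    for nic in adapters:
        if nic.vmq_enabled and nic.link_speed_bps < threshold_bps:
            # Double any single quote so the name stays valid inside a
            # PowerShell single-quoted string.
            safe_name = nic.name.replace("'", "''")
            commands.append(f"Disable-NetAdapterVmq -Name '{safe_name}'")
    return commands


if __name__ == "__main__":
    # Made-up inventory for illustration only.
    inventory = [
        Adapter("Onboard 1GbE Port 1", 1 * GBPS, True),
        Adapter("Onboard 1GbE Port 2", 1 * GBPS, False),
        Adapter("Converged 10GbE Port 1", 10 * GBPS, True),
    ]
    for command in vmq_disable_commands(inventory):
        print(command)
```

The script does not change anything on the host. It only prints the commands so that you can review them before running them in an elevated PowerShell session.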

For those of you struggling with Emulex converged networking NICs, I hear you. Emulex were horrendously slow at responding to issues. They finally released an update, but some server manufacturers have been even slower at releasing updated firmware and drivers. The temporary workaround is to disable VMQ, which will bottleneck your inbound traffic on 10 Gbps or faster networking.

Update the Operating System

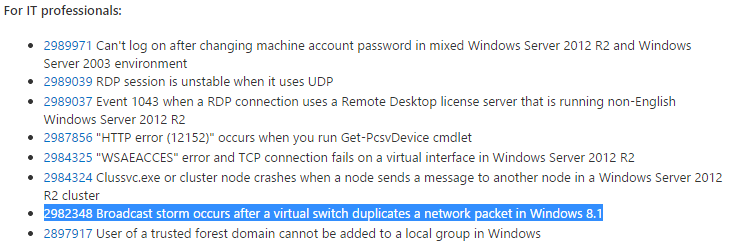

Microsoft is constantly issuing fixes for issues that they discover. Make sure that you approve and install any outstanding updates for the host’s management OS and the guest OS of your virtual machines.

NIC Teaming

I normally prefer, and Microsoft normally recommends, the Switch Independent connection mode for Windows Server NIC teaming. But there are scenarios, related to physical switching, where another mode, such as LACP, might be better. If you experience a situation where a Switch Independent team doesn’t detect a dead port (it won’t), then switch to LACP. This will require a switch that supports, and is enabled for, LACP teaming.

Wi-Fi Networking

Having Client Hyper-V built into Windows 8 and Windows 8.1 (Pro and Enterprise editions) is really useful for anyone who wants a convenient and portable lab. But it really does stress Hyper-V networking because it is working with a much wider variety of drivers and firmware. That’s why we see lots of issues with networking in Client Hyper-V. The most common issues are related to Wi-Fi networking.

Ben Armstrong, one of the Microsoft program managers behind Hyper-V, wrote a post recently that explains how Wi-Fi networking works with the Hyper-V virtual switch and why certain things don’t work.

Firmware and Drivers

If you attend a session that I present, then you will hear me repeat this over and over again: update your firmware and drivers. Yes, I said it before, and I’ll say it again: update your drivers and firmware.