PowerApps Gains New Capabilities with Artificial Intelligence

Have you ever wanted to extract information from business cards in one of your apps with a photo? My customers have.

Have you ever wanted to extract information from paper forms with a quick picture? My customers have.

Have you ever wanted to count objects, aka take inventory, by taking a picture? My customers have.

Well, folks, if you found yourself saying yes to those questions, it is the best day ever!

During the keynote today at Microsoft Business Application Summit (MBAS) they announced all of this and more. For a while now, Azure Cognitive Services and other components have had a lot of these capabilities available, but it took a pro developer and a lot of work to leverage many of them. The idea of sending your images to an API, waiting on it to process that image, and then getting the results back in JSON was just tough if you weren’t a coder. And that was just for something simple like a business card.

The really cool kids wanted to extract information from forms or count the number of objects in a picture. This is the type of stuff that our brains just do automatically, but a computer needs a trained AI model to do it. The PowerApps team decided that was too hard. So they turned it into functionality available right inside PowerApps Studio.

Scanning business cards

This functionality just works. You add the Business Card Reader control to your app and you are in business. When you click on the control it asks you to choose or take a picture (this means it works on PCs, tablets, or mobiles) and then it processes away. All of the values are then presented as properties of the control. So the syntax BusinessCardReader1.Email spits out the email address. No special work required. I literally had the business card scanner added to my app within 2 minutes of logging in. Add this one today and blow your co-workers’ minds. No licensing, no nothing.
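To make that concrete, here is a minimal sketch of saving the scanned email address with a button. Only BusinessCardReader1.Email comes from my app; the Contacts data source, its Email column, and the button itself are hypothetical names for illustration, so swap in whatever your app actually uses.

```powerfx
// OnSelect of a hypothetical "Save contact" button.
// Contacts is an assumed data source with an Email column; neither comes from the original app.
Patch(
    Contacts,
    Defaults(Contacts),
    { Email: BusinessCardReader1.Email }
)
```

Patch with Defaults creates a new record, so each scan you save becomes a new row in the data source.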

Object Detector

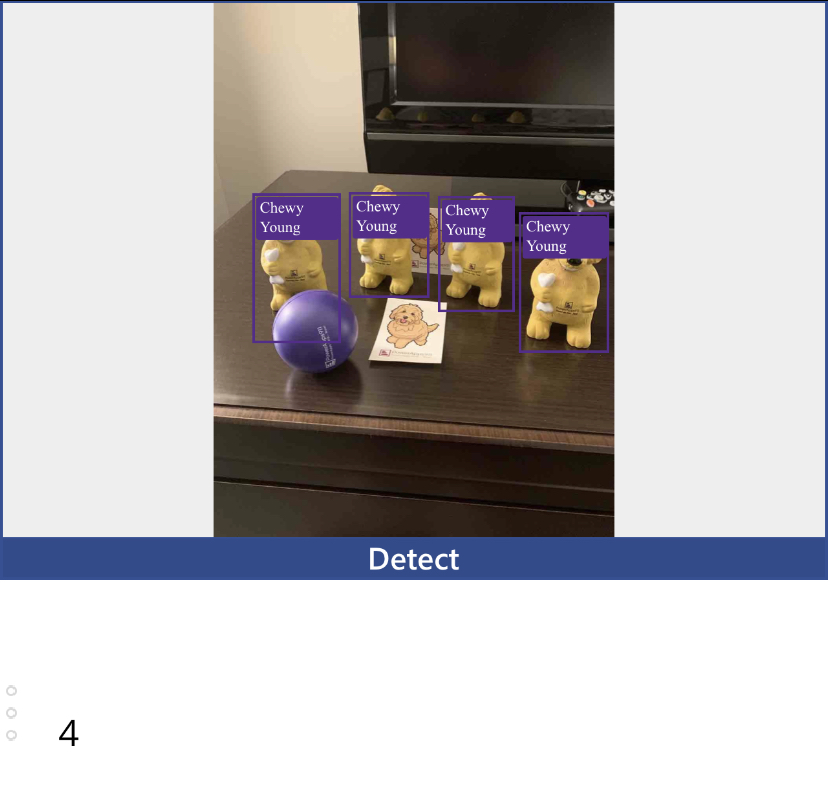

I had some fun with this one. To celebrate being at MBAS we had some Chewy stress toys made. They are super cool and I have a bag of them. (If you are here and want one just mention this article.) So I set out to see how hard it would be to get PowerApps to count them. Turns out it was pretty easy. First thing I had to do was train a model. Sounds scary. What it really means is I had to take 15 or more pictures of the dogs. I then uploaded the pictures and drew a rectangle around each one. My hotel room was like a New York runway with all of the pics. After a few minutes of processing, I actually got a model score of 100% which seemed pretty good.

Now an interesting part is that the data I was tagging, in this case Chewy Young, has to come out of the Common Data Service (CDS). You can’t provide other data sources like SharePoint or SQL. This wasn’t a problem for me but might slow you down a bit. That is okay. Remember, you can sign up for a free P2 trial to check this out. This is definitely a premium feature worth the premium license. This is going to change how your business does work. My mind is blown.

Also, when you are training you can train more than one item at a time. So if I had mixed in pictures of the Shane bobblehead I could have trained both at the same time.

With my model done I was then able to add the Object Detector to my app. Getting data out of that tool is a little trickier. Why? Because it returns a table of data. When you show it an image you might have both Shane and Chewy in the image. So the formula in a text box might look something like First(ObjectDetector2.VisionObjects).count in my case, since I only had one object trained. If I had more I would have used LookUp to query the number of Chewys vs. Shanes. Here is an image of the app I built as fast as humanly possible counting Chewys.

As you can see, I threw in some random stuff and it still counted four Chewys. I am so impressed I can’t stand it. I also cannot imagine the apps you are going to create with this and share with the world.
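If you do train more than one object, the LookUp approach mentioned above might look roughly like this. This is a sketch, not something I have run against a two-object model: it assumes the VisionObjects table has a displayName column holding the tag name next to count, and that column name is my assumption, so check the columns your own control exposes.

```powerfx
// Text property of a label that shows how many Chewys were detected.
// displayName is an assumed column name; count is the column used in the First() formula.
LookUp(
    ObjectDetector2.VisionObjects,
    displayName = "Chewy Young"
).count
```

A second label with the Shane tag name in the condition would give you the other count.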

Other Tools

In addition to those two awesome tools that I am looking forward to rolling out immediately, there is also Binary Classification for doing predictive analysis, Form Processing for reading paper (hello invoice scanner), and Text Classification to help tag snippets of text. I haven’t played with any of these yet but I will soon.

Summing it up

This was a good day for the PowerApps world. We got some major new capabilities that are just not even imaginable on other platforms. Do keep in mind these features are still in Preview, so there could be changes before they go GA, but I can tell you I have customers that will be rolling them out within days of this announcement. It is too cool not to start digging in. Also, if you want a more hands-on walkthrough of using these tools, it will be on my PowerApps YouTube channel soon. So subscribe and look forward to lots of fun AI demos and tutorials as I nerd out.