Today I’ll walk you through the basics of Hyper-V Network Virtualization (HNV), including how multitenant computing works and its challenges. I’ll also go into the concepts of how HNV is implemented in Windows Server 2012, Windows Server 2012 R2, and System Center.

Challenges of Multitenant Computing

Much of what Microsoft has done with Hyper-V and System Center in the 2012 and 2012 R2 generations was based on its own development and experiences in Windows Azure, as well as on feedback gathered from hosting companies. A key trait of a cloud is multitenancy, in which multiple customers of the cloud (known as “tenants”) rent space in the cloud and expect to be isolated from each other. Imagine Ford and General Motors both wanting to use the services of the same public cloud. They must be isolated. To do so, the cloud operator (the hosting company) must ensure that:

- Customers cannot communicate with each other: This is not only to prevent data leakage but also to prevent deliberate attack (corporate espionage) or accidental attack (via infection).

- Hosting companies cannot trust their tenants: Some customers do very dumb things, like, oh, opening TCP 1433 to the world and/or using “monkey” as their root/Administrator password. A hosting company cannot let a successful attack on a tenant compromise the hosting infrastructure and all of the other tenants along with it.

As Microsoft found out, achieving this level of isolation with traditional solutions isn’t easy. How is this done with physical networking? The answer is probably to have one or more virtual local area networks (VLANs) per tenant, with physical firewall routing/filtering to isolate the VLANs. However, this is not what VLANs were intended for. They were meant to provide a way to subnet IP ranges on a single LAN and to control broadcast domains.

Somehow, the poor network engineer was convinced that VLANs should be twisted into a security solution. Doing this simply does not scale, for the following reasons:

- Networks are limited to 4,096 VLANs. That might sound like a lot, but if each tenant has two, three, four, or more VLANs, then that number does not scale in the cut-throat hosting world, where margins are small and volume is the key to profitability and sustainability.

- Maintaining the stability and security of firewalled VLANs at this scale is not a task for the timid… or the bold, as it happens.

- This is hardware-defined networking. Deploying a new tenant requires engineering, and that prevents the essential cloud trait of self-service, as well as a hosting company’s desire to rapidly sell and provision tenants via online means with as little costly human engineering as possible (remember that margins are small, so human hours are expensive).
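To put a rough number on the first limit, here is a back-of-the-envelope sketch. It assumes the standard 12-bit 802.1Q VLAN ID, of which two values are reserved; the function name and the per-tenant counts are illustrative only.

```python
# Back-of-the-envelope VLAN capacity for a hosting company.
# The 802.1Q VLAN ID is a 12-bit field; IDs 0 and 4095 are reserved.
VLAN_ID_BITS = 12
TOTAL_VLAN_IDS = 2 ** VLAN_ID_BITS        # 4096
USABLE_VLAN_IDS = TOTAL_VLAN_IDS - 2      # 4094


def max_tenants(vlans_per_tenant: int, reserved_for_host: int = 0) -> int:
    """How many tenants fit if each one needs `vlans_per_tenant` VLANs.

    `reserved_for_host` is a hypothetical count of VLANs the hosting
    company keeps back for its own infrastructure.
    """
    available = USABLE_VLAN_IDS - reserved_for_host
    return available // vlans_per_tenant


for n in (1, 2, 3, 4):
    print(f"{n} VLAN(s) per tenant -> at most {max_tenants(n)} tenants")
```

With four VLANs per tenant, the ceiling is roughly a thousand tenants for the whole network, before the hosting company reserves anything for itself.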

Software Defined Networking (SDN)

Microsoft announced a solution to the limits of VLANs in the cloud using a new feature that was codeveloped for Windows Server 2012 Hyper-V and Windows Azure. This new feature was called Hyper-V Network Virtualization (HNV). This is based on a more general concept called Software Defined Networking (SDN).

The goal of SDN is to virtualize the network. That will, and should, provoke the response, “What the <insert expletive of choice>?” Calm down. Take a step back. Think back on the computer room of yore, full of physical servers that took up rack space and electricity. What did we do with them? We turned them into software-defined machines. Yes, we virtualized them. Now they exist only as software representations. And from then on, we (normally) only ever deploy virtual machines whenever someone asks for a “server.” That’s what SDN does: It takes a request for a “network” and deploys a virtual network. That’s a network that doesn’t exist in the physical realm (top-of-rack switches, core switches, etc.); instead, it exists in the hypervisor.

The core advantages of adopting HNV over VLANs are:

- Virtual networks are software defined: This makes HNV appropriate for enabling self-service tenant on-boarding (getting tenants to register, deploy services, and eventually pay) without using human engineering to deploy VLANs.

- Scalability: Virtual networks scale way beyond the tiny quantity limits of VLANs.

- Simplicity: This is tied to scalability. There is no need to configure complicated firewall rules to isolate tenants from each other and the hosting company. This isolation is there by default.

- IP Address Mobility: The IP address that the tenant uses is irrelevant because it is isolated. In fact, tenants can use overlapping IP addresses.

These are just the basic benefits of HNV. There are more, as you will see later.

Basic Concepts of Hyper-V Network Virtualization

SDN and HNV abstract IP address spaces. This is done using two types of address:

- Customer Address (CA): This is the IP address that the tenant uses in their virtual network. This address is set in the guest OS of the virtual machine as normal; it’s the only address that the tenant is normally aware of.

- Provider Address (PA): This is the address that is assigned to the host on the physical (provider) network, through the NIC that the virtual switch is bound to, so that traffic between virtual machines can be carried at the physical layer.

HNV actually has two ways of working.

- IP Address Rewrite: For every CA assigned to a VM, a matching PA must be assigned to the host. This HNV method is just not scalable because a large cloud will run out of IP addresses, and IP usage has to be tracked at a huge scale in a public cloud.

- Network Virtualization using Generic Routing Encapsulation (NVGRE): With this method, a single PA is assigned to the host and VM network packets are encapsulated. The industry agrees that this method is the way forward, and so Microsoft focused on it with System Center 2012 SP1 and System Center 2012 R2.
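The difference in address consumption is easy to see with some arithmetic. This is a minimal sketch that assumes one CA per VM; the host and VM counts are made-up figures, and the function is purely illustrative.

```python
def provider_addresses_needed(hosts: int, vms_per_host: int, method: str) -> int:
    """Count the PAs a cloud consumes under each HNV method.

    Assumes every VM has exactly one CA (a simplification).
    """
    if method == "rewrite":
        # IP Address Rewrite: one PA on the host for every CA.
        return hosts * vms_per_host
    if method == "nvgre":
        # NVGRE: a single PA per host, shared by all of its VMs.
        return hosts
    raise ValueError(f"unknown method: {method!r}")


# Hypothetical cloud: 500 hosts running 40 VMs each.
print(provider_addresses_needed(500, 40, "rewrite"))
print(provider_addresses_needed(500, 40, "nvgre"))
```

Under these assumptions the rewrite method consumes one PA per VM, while NVGRE consumes one per host, no matter how many VMs each host runs.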

A Basic Example of NVGRE.

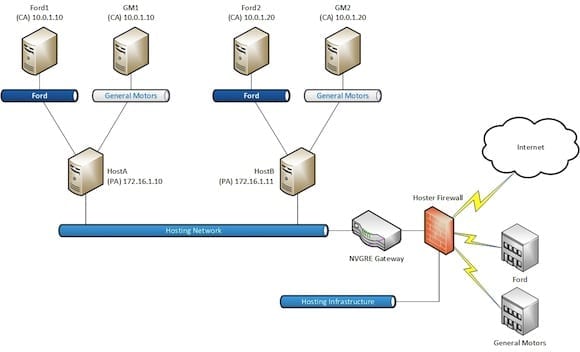

The diagram above illustrates how two tenants can reside on the same cloud and how virtual machines on the same virtual network can communicate using NVGRE. In this example, note that a single virtual network is created for Ford that spans multiple hosts. The same is true for General Motors. The virtual machine, Ford1, is assigned a CA of 10.0.1.10 and so is GM1. The two virtual machines reside on different virtual networks, so there is no issue of overlapping IP addresses; they simply will not route to each other anyway because of tenant network isolation.

If Ford1 needs to communicate with Ford2, the packet from the CA of Ford1 will be encapsulated by HostA. HostA has a lookup table to know where Ford2 is (on HostB). HostA will send the encapsulated packet from Ford1 to HostB, where the encapsulation is stripped and the original packet is passed to Ford2.

Ford3, Ford4, Ford5, etc., can be deployed on either HostA or HostB. Each will get its own CA (either assigned by the cloud or by the tenant), but there is no need for more than one PA per host thanks to NVGRE. That keeps IP management simpler and more scalable.
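The Ford1-to-Ford2 flow can be modelled in a few lines. This is a toy sketch, not how Hyper-V implements it: the PAs, the network IDs, and the CAs of Ford2 and GM2 are all made-up values, and only the 10.0.1.10 address shared by Ford1 and GM1 comes from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    """A tenant packet addressed with CAs only."""
    src_ca: str
    dst_ca: str
    payload: str


@dataclass(frozen=True)
class NvgrePacket:
    """The same packet wrapped for the physical network."""
    src_pa: str
    dst_pa: str
    network_id: int   # identifies the tenant's virtual network
    inner: Packet


# Hypothetical values: PAs, network IDs, and the Ford2/GM2 CAs are made up.
HOST_A_PA = "192.168.100.1"
HOST_B_PA = "192.168.100.2"
FORD, GM = 5001, 5002

# Lookup table held by each host: (network ID, CA) -> PA of the hosting host.
LOOKUP = {
    (FORD, "10.0.1.10"): HOST_A_PA,  # Ford1
    (FORD, "10.0.1.11"): HOST_B_PA,  # Ford2
    (GM, "10.0.1.10"): HOST_A_PA,    # GM1 (same CA as Ford1, different network)
    (GM, "10.0.1.11"): HOST_B_PA,    # GM2
}


def encapsulate(local_pa: str, network_id: int, packet: Packet) -> NvgrePacket:
    """Wrap a tenant packet; raises KeyError if the destination is unknown."""
    dst_pa = LOOKUP[(network_id, packet.dst_ca)]
    return NvgrePacket(local_pa, dst_pa, network_id, packet)


def decapsulate(wrapped: NvgrePacket) -> Packet:
    """Strip the outer header on the receiving host."""
    return wrapped.inner


ford_packet = Packet("10.0.1.10", "10.0.1.11", "hello Ford2")
on_the_wire = encapsulate(HOST_A_PA, FORD, ford_packet)
print(on_the_wire.dst_pa, on_the_wire.network_id)
print(decapsulate(on_the_wire) == ford_packet)
```

Because the table is keyed on the network ID as well as the CA, Ford1 and GM1 can share 10.0.1.10 without any ambiguity about which tenant a packet belongs to.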

Diving a Little Deeper into HNV

It is rare that a tenant wants just one network. The example shown above is very simplistic, and to be honest, it doesn’t accurately represent what HNV provides. It is a basic illustration to present a concept, often shown as Red Corp and Blue Corp during Microsoft presentations. It’s time to go further down the rabbit hole and see what HNV can do.

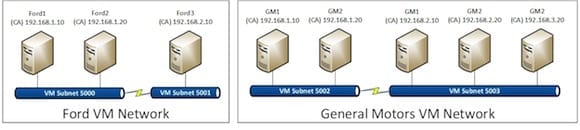

Dividing VM networks into VM subnets.

In reality a tenant often wants multiple subnets in their virtual network. HNV allows this, as you can see in the above diagram. Again, in this example, Ford and General Motors have virtual networks, referred to as VM networks in System Center Virtual Machine Manager (VMM). This provides tenant isolation. Both Ford and General Motors require more than one subnet, so these are provided in the form of VM subnets. These subnets have IDs, IP ranges, and a default gateway, just like a subnet does in the physical network.

- VM subnet ID: HNV supports over 16 million VM subnet IDs. That gives HNV just a little bit of a higher ceiling than 4,096 possible VLANs.

- IP range: Each VM subnet gets a range of addresses, such as 10.0.0.0/15, 172.16.0.0/16, 192.168.1.0/24, or whatever you think is valid. Remember these are abstracted from the physical world, so you use whatever is best for your cloud and/or for your tenant(s). In fact, maybe the tenant even assigns a range during the onboarding process!

- Default gateway: The first IP address (such as 192.168.1.1) is assigned to the default gateway. This allows VMs in one VM subnet to route to VMs in another VM subnet, as long as they are in the same VM network. There is no actual router to configure; this is a function of HNV that just works “auto-magically.”
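Two of these properties can be checked with Python's standard `ipaddress` module. This is a small sketch: it assumes the subnet ID is the 24-bit identifier that NVGRE carries, and `default_gateway` is a hypothetical helper that mirrors the "first address in the range" convention described above.

```python
import ipaddress

# Assumption: the VM subnet ID is a 24-bit value; the VLAN ID is 12 bits.
VM_SUBNET_ID_BITS = 24
VLAN_ID_BITS = 12
MAX_VM_SUBNET_IDS = 2 ** VM_SUBNET_ID_BITS
MAX_VLAN_IDS = 2 ** VLAN_ID_BITS

print(MAX_VM_SUBNET_IDS)                  # 16777216
print(MAX_VM_SUBNET_IDS // MAX_VLAN_IDS)  # 4096 times the VLAN ceiling


def default_gateway(cidr: str) -> ipaddress.IPv4Address:
    """Return the first address of the range, used as the default gateway.

    IPv4Network raises ValueError if the range has host bits set,
    which catches mistyped ranges early.
    """
    network = ipaddress.IPv4Network(cidr)
    return network.network_address + 1


print(default_gateway("192.168.1.0/24"))  # 192.168.1.1
print(default_gateway("192.168.2.0/24"))  # 192.168.2.1
```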

Now, in the above diagram we can see that Ford and General Motors have both deployed two VM subnets in their own VM networks. The Ford VM subnets are routed to each other, and the General Motors VM subnets are routed to each other, but Ford, General Motors, and the hosting company cannot route to one another. In the case of General Motors, the VMs in VM subnet 5002 will have a default gateway of 192.168.1.1 and the VMs in VM subnet 5003 will have a default gateway of 192.168.2.1. VMs in each VM subnet will use their respective default gateways to communicate with other VM subnets in the same VM network.

What if a tenant wants to isolate VM subnets from each other? In this case, you deploy another VM network, because the VM network is the unit of isolation. Any VM subnet in a VM network is routed to all other VM subnets in that VM network, and VM subnets in one VM network are isolated from VM subnets in other VM networks.
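The isolation rule boils down to two checks, sketched below as a toy model. The General Motors subnet IDs (5002 and 5003) come from the example above; the VM names and the Ford subnet ID are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VM:
    name: str
    vm_network: str   # the unit of isolation
    vm_subnet: int    # VM subnet ID inside that VM network


def can_communicate(a: VM, b: VM) -> bool:
    """VMs can reach each other only inside the same VM network."""
    return a.vm_network == b.vm_network


def needs_default_gateway(a: VM, b: VM) -> bool:
    """Traffic crosses the default gateway when the VM subnets differ."""
    return can_communicate(a, b) and a.vm_subnet != b.vm_subnet


gm_web = VM("GM-Web1", "General Motors", 5002)   # hypothetical VM name
gm_sql = VM("GM-Sql1", "General Motors", 5003)   # hypothetical VM name
ford_web = VM("Ford-Web1", "Ford", 5001)         # hypothetical name and ID

print(can_communicate(gm_web, gm_sql))        # True: same VM network
print(needs_default_gateway(gm_web, gm_sql))  # True: different VM subnets
print(can_communicate(gm_web, ford_web))      # False: different VM networks
```

Note that the check never looks at IP addresses, which is why overlapping ranges between tenants are harmless.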

Communicating with the Physical World

It’s all well and good if you have services running on these nicely isolated virtual machines, but there are PCs that will want to connect to them to access SharePoint farms and send or receive emails, and there are web farms to publish to the Internet. How exactly do VMs on abstracted networks communicate with the world via their NVGRE encapsulated networking?

This is made possible using an NVGRE appliance. We have returned to the high-level view of NVGRE and added an NVGRE appliance (sometimes called a gateway) to the following illustration. This appliance allows us to extend the virtualized NVGRE networks to the physical world.

- NAT: Internet facing services, such as e-commerce, are NATed by the appliance. This allows incoming/outgoing packets to/from the Internet to reach designated servers in the VM networks/subnets.

- Routing: Internal BGP (iBGP) is provided by the appliance to allow tenants to integrate their on-premises networks with networks in a hosted private or public cloud. This is known as hybrid networking. Using iBGP provides fault-tolerant routing from multiple possible connections in the tenants’ on-premises networks.

- Gateway: The hosting company can route (probably via firewall) onto the VM subnets to provide additional management functionality.

An NVGRE appliance.

The VM Networks are compartmentalized in the NVGRE Gateway to continue the isolation of the VM networks through to the physical layer without any need for using VLANs.

The lack of available NVGRE appliances delayed the adoption of SDN and HNV, but these appliances are starting to appear from companies such as Iron Networks, Huawei, and F5.

The Role of System Center VMM

System Center VMM plays several critical roles in HNV.

- Creation and management: VMM is the engine of change in the Microsoft cloud. It provides the tools for administrators to model Logical Networks and to implement VM networks and their nested VM subnets. Other parts of System Center can reach into VMM once the underlying Logical Network architecture is completed, and provide automated deployment of more VM networks and VM subnets. This enables self-service in the cloud.

- Maintaining lookup tables: A VM network can span many hosts in a data center. When two VMs on different hosts want to communicate, the host with the sending VM needs to know where to forward the encapsulated NVGRE packets. A lookup table records which VMs are on which hosts. While you could build this table on each host using PowerShell, it would quickly become out of date thanks to new VMs, self-service tenant onboarding, and Live Migration. VMM is the center of all fabric and VM placement knowledge in the Microsoft cloud; it makes sense that it takes on the responsibility of maintaining and updating those NVGRE forwarding tables. A benefit of this functionality is that the subnet is no longer a boundary for VM Live Migration. Instead, large data centers can move VMs to any host subnet as long as the NVGRE appliance and VMM can continue to integrate with and manage the VM and destination host.

- Integration of the NVGRE appliance: VMM will integrate the NVGRE appliance via a provider. This allows VMM to create the necessary compartmentalizing in the appliance and to update the necessary NVGRE lookup tables so the appliance knows what hosts VMs are placed on to correctly forward encapsulated packets.
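The reason a central manager keeps the tables current can be illustrated with a toy model. This is not VMM's API or data model; the class names, addresses, and network ID are all hypothetical, and a real system would push incremental updates instead of full copies.

```python
class Host:
    """A Hyper-V host (or gateway) holding a local copy of the lookup table."""

    def __init__(self, name: str, pa: str):
        self.name = name
        self.pa = pa
        self.table: dict[tuple[int, str], str] = {}  # (network ID, CA) -> PA


class PolicyManager:
    """Stand-in for the role VMM plays: one source of truth, pushed to all."""

    def __init__(self) -> None:
        self.hosts: list[Host] = []
        self.records: dict[tuple[int, str], str] = {}

    def add_host(self, host: Host) -> None:
        self.hosts.append(host)
        self._push()

    def place_vm(self, network_id: int, ca: str, host: Host) -> None:
        """Record a new VM or a Live Migration; the CA never changes."""
        self.records[(network_id, ca)] = host.pa
        self._push()

    def _push(self) -> None:
        # Every host receives a fresh copy of the full table.
        for host in self.hosts:
            host.table = dict(self.records)


manager = PolicyManager()
host_a = Host("HostA", "192.168.100.1")
host_b = Host("HostB", "192.168.100.2")
manager.add_host(host_a)
manager.add_host(host_b)

manager.place_vm(5001, "10.0.1.10", host_a)   # VM starts on HostA
manager.place_vm(5001, "10.0.1.10", host_b)   # Live Migration to HostB
print(host_a.table[(5001, "10.0.1.10")])      # 192.168.100.2
```

After the migration, every host resolves the unchanged CA to the new host's PA, which is the property that lets a VM move across host subnets without the tenant noticing.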

Improvements in Windows Server and System Center 2012 R2

There are some significant feature improvements and introductions in Windows Server and System Center (WSSC) 2012 R2 that we will highlight.

- Windows Server 2012 R2 NVGRE appliance: WS2012 R2 RRAS includes the ability to function as an NVGRE appliance. This means that you can deploy WS2012 R2 virtual machine(s) as NVGRE appliance(s) on dedicated host(s) in an edge network instead of purchasing expensive third-party solutions. This is huge news because it makes SDN more affordable. Note that third-party solutions can offer additional benefits; F5’s appliance, for example, will integrate with its BIG-IP load balancer for added intelligence of service optimization.

- HNV and Virtual Switch extensibility are compatible: HNV lived outside of the virtual switch in Windows Server 2012, and that meant that its traffic lived outside of the scope of any added virtual switch extension. In WS2012 R2, all HNV packets that pass through the virtual switch are subject to any inspection and/or management that is added in the form of a virtual switch extension.

- DHCP can be used for CA assignment: Tenants can use DHCP to assign CAs to their VMs on WS2012 R2 Hyper-V hosts. This is because HNV can learn the CAs assigned to VMs.

- Improved IPAM integration: WS2012 R2 IP Address Management integrates with VMM 2012 R2 and provides better network design and IP address management in the CA space.

- Diagnostics: Cloud operators can test VM connectivity without logging into a tenant’s VM.

Further Learning

Damian Flynn (MVP, Cloud and Datacenter Management) and Nigel Cain (Microsoft) are writing a series of articles to explain the concepts of HNV and how to design and implement a solution using System Center. This series is expanding over time and is essential reading for anyone who wants to learn about the intricacies of HNV. There is a steep learning curve, but it is one worth climbing.

At TechEd 2013, Greg Cusanza (Microsoft) and Charlie Wen (Microsoft) presented two sessions (MDC-B350 and MDC-B351) that are must-watch content if you are just reviewing the concepts of HNV. In them, they explain how HNV works and how it can be designed and implemented, and they go over the new features of Windows Server and System Center 2012 R2.