Deploying QoS Packet Scheduler in Windows Server 2012

In this post we will show you how to deploy bandwidth management via Quality of Service (QoS) Packet Scheduler in Windows Server 2012 (WS2012). This type of QoS can be used when converging the networks of a Hyper-V host’s management OS or a non-Hyper-V WS2012 or later operating system.

What Is QoS Packet Scheduler?

This method of QoS can be used when you are dealing with networking that does not pass through a Hyper-V virtual switch in the configured operating system. This means that you can use this type of bandwidth management to guarantee minimum levels of bandwidth for protocols (not NICs) in:

- Physical NICs in a Hyper-V host that are used only for host networking

- Physical servers that have nothing to do with Hyper-V

- Within the guest OS of virtual machines

As noted, this form of QoS works by assigning a weight or absolute bits per second rate to a protocol, such as live migration or cluster communications. This ensures that the protocol in question will get a minimum level of bandwidth on a contended NIC. The protocol can burst beyond that guarantee when there is both demand and idle capacity.

QoS rules that are enforced by the Packet Scheduler have two traits that you should be aware of:

- This form of bandwidth management is implemented by the processor and will have scalability limitations in truly huge workloads.

- The QoS Packet Scheduler cannot enforce QoS on traffic that it cannot see, such as Remote Direct Memory Access (RDMA), which is used by SMB Direct.

If you need every cycle of processor capacity (which is normally underutilized) for your guests’ services, or you want to use converged RDMA, then you should use Datacenter Bridging (DCB) to implement QoS at the hardware layer instead. This will require DCB-capable NICs and switches.

Example of QoS Enforced by the Packet Scheduler

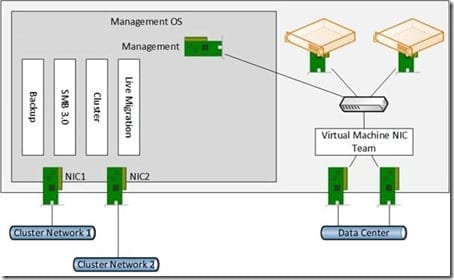

In this design we will be converging several networks on WS2012, as shown below.

- Backup: A backup product that uses TCP 10000 to communicate with agents on the host is being used.

- SMB 3.0: SMB 3.0 will be used as the storage protocol to store virtual machines on a Scale-Out File Server (SOFS).

- Cluster: This is the cluster communications network that is responsible for the heartbeat.

- Live Migration: The host is WS2012 Hyper-V so traditional TCP/IP Live Migration is being used.

A possible WS2012 converged networks design.

Two physical NICs are being used for the host networking. Try not to overthink this; that’s a common mistake for people who are new to this kind of networking. The design is quite simple:

- 2 NICs, each on a different VLAN/subnet

- Each NIC has a single IP address

- The NICs are not teamed

This configuration is a requirement for SMB Multichannel communications to a cluster such as a SOFS. Critical services such as cluster heartbeat, live migration, and SMB Multichannel will fail over between the non-teamed NICs automatically if you have configured them correctly. For example, enable both NICs for Live Migration in your host/cluster settings.

Just as with QoS that is enforced by the virtual switch, your total assigned weight should not exceed 100. This makes the algorithm work more effectively and makes your design easier to understand; weights can then be viewed as percentages. We will assign weights as follows on this host with 10 GbE networking:

- Backup: 20

- SMB 3.0: 40

- Cluster: 10

- Live Migration: 30
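To make the weights concrete, here is a minimal sketch that converts them into the minimum bandwidth each protocol could expect on a fully contended 10 GbE NIC. It assumes that each share is simply the weight divided by the total weight; the variable names are only for illustration.

```powershell
# Link speed of the contended NIC in Gbps (10 GbE, as in this design).
$linkGbps = 10

# Weights from the list above; [ordered] keeps them in the same order.
$weights = [ordered]@{
    'Backup'         = 20
    'SMB 3.0'        = 40
    'Cluster'        = 10
    'Live Migration' = 30
}

# Add up the weights and warn if the design exceeds 100.
$total = ($weights.Values | Measure-Object -Sum).Sum
if ($total -gt 100) {
    Write-Warning "Total weight is $total; keep it at 100 or below."
}

# Assumption: a protocol's guaranteed share is weight / total weight.
foreach ($name in $weights.Keys) {
    $minGbps = $linkGbps * $weights[$name] / $total
    '{0,-15} weight {1,3} -> at least {2:N1} Gbps under contention' -f $name, $weights[$name], $minGbps
}
```

With these numbers, SMB 3.0 is guaranteed roughly 4 Gbps when the NIC is saturated, and it can still burst higher when the other protocols are idle.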

Implementing QoS Enforced by the Packet Scheduler

PowerShell will be used to create the QoS rules. The cmdlet used is New-NetQosPolicy.

New-NetQosPolicy "Live Migration" -LiveMigration -MinBandwidthWeight 30

The above example uses the built-in filter called LiveMigration to create a rule called “Live Migration” and assigns a minimum bandwidth weight of 30 to Live Migration traffic. The rule is applied to all NICs that the QoS Packet Scheduler is responsible for, which are the physical NICs that are not connected to the virtual switch.

You can extend this cmdlet to assign an IEEE 802.1p priority that can be leveraged by your physical network admins.

New-NetQosPolicy "Live Migration" -LiveMigration -MinBandwidthWeight 30 -Priority 5

Note that some switches with out-of-date software have had trouble with packets that are tagged with the priority flag.
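If the rule was already created without a priority, creating a second rule with the same name is likely to fail. A hedged alternative, assuming a policy named "Live Migration" already exists on the host, is to update it in place with Set-NetQosPolicy and then review the result:

```powershell
# Assumes a policy named "Live Migration" already exists on this host.
# Adds an 802.1p priority of 5 without recreating the policy.
Set-NetQosPolicy -Name "Live Migration" -PriorityValue8021Action 5

# Review the policy to confirm the change.
Get-NetQosPolicy -Name "Live Migration"
```

Run this from an elevated PowerShell session. It is worth testing the priority tag on a single host first, given the switch issues noted above.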

After that you can implement QoS for SMB traffic:

New-NetQosPolicy "SMB" -SMB -MinBandwidthWeight 40

Other protocols won’t have a built-in filter. In those cases we can use the configuration of the protocol to define the traffic that is being managed. This first example will implement QoS for cluster communications by defining the destination IP port of the protocol in question:

New-NetQosPolicy "Cluster" -IPDstPort 3343 -MinBandwidthWeight 10

We can follow the same pattern to make a rule for our TCP 10000 backup protocol:

New-NetQosPolicy "Backup" -IPDstPort 10000 -MinBandwidthWeight 20

There are many more ways to identify the traffic that is being managed by the QoS Packet Scheduler, and you can find more information about these on TechNet.
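As a rough illustration of those other match conditions, the sketch below shows two alternative ways to define the backup rule. Pick one of them in place of the port-only rule rather than running them together. The subnet 192.168.10.0/24 is a made-up example value, not part of this design.

```powershell
# Alternative 1: match only TCP traffic to destination port 10000.
New-NetQosPolicy -Name "Backup" -IPProtocolMatchCondition TCP `
    -IPDstPortMatchCondition 10000 -MinBandwidthWeightAction 20

# Alternative 2 (hypothetical subnet): match traffic sent to the network
# where the backup server lives, regardless of port.
# New-NetQosPolicy -Name "Backup" -IPDstPrefixMatchCondition "192.168.10.0/24" `
#     -MinBandwidthWeightAction 20
```

The long parameter names used here are the full forms of the shorter ones used earlier in this post, such as -IPDstPort and -MinBandwidthWeight.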

And that’s it! Four lines of PowerShell have configured QoS for four protocols that are used by the host’s management OS.
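For convenience, the four rules can be collected into one script with a verification step at the end. This is only a sketch of how the commands from this post might be combined; run it from an elevated PowerShell session on the host, and note that the rollback line at the bottom is an optional extra.

```powershell
# Create the four QoS rules for the host's management OS traffic.
New-NetQosPolicy -Name "Live Migration" -LiveMigration -MinBandwidthWeightAction 30
New-NetQosPolicy -Name "SMB" -SMB -MinBandwidthWeightAction 40
New-NetQosPolicy -Name "Cluster" -IPDstPortMatchCondition 3343 -MinBandwidthWeightAction 10
New-NetQosPolicy -Name "Backup" -IPDstPortMatchCondition 10000 -MinBandwidthWeightAction 20

# List the policies to confirm that all four were created.
Get-NetQosPolicy

# Optional rollback: remove a rule by name if you need to start over.
# Remove-NetQosPolicy -Name "Backup" -Confirm:$false
```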