Creating Advanced Functions in PowerShell

In a previous article, we started looking at the process of moving from a few lines of PowerShell commands to a re-usable PowerShell function. If you missed that article, take a few minutes to get caught up. The last version of the Get-MyUptime function should serve as a model for the minimum level of scripting complexity. It works, writes objects to the pipeline and is re-usable. Once you understand how all of the pieces work, you’ll eventually realize what you need to take it to the next level.

Creating an Advanced PowerShell Function

If you’ve been using PowerShell for a while, you’ll realize that version has some limitations. There’s no error handling. What if the user of my PowerShell function tries to get uptime for a server that isn’t online or that they don’t have permission to access? What if you want to pipe in a list of computer names, say from a text file? What if I need to troubleshoot or debug the function? These are some of the issues that can be addressed by creating what we refer to as an advanced PowerShell function. Here’s my version.

    Function Get-MyUptime {
        [cmdletbinding()]
        Param(
            [Parameter(Position=0,ValueFromPipeline,ValueFromPipelineByPropertyName)]
            [ValidateNotNullorEmpty()]
            [Alias("cn","host")]
            [string[]]$Computername = $env:Computername
        )

        Begin {
            Write-Verbose -Message "Starting $($MyInvocation.Mycommand)"
        } #begin

        Process {
            Foreach ($computer in $computername) {
                Write-Verbose "Getting uptime from $($computer.toupper())"
                Try {
                    $Reboot = Get-CimInstance Win32_OperatingSystem -ComputerName $computer -ErrorAction Stop | Select-Object CSName,LastBootUpTime
                }
                Catch {
                    Write-Error $_
                }

                if ($Reboot) {
                    Write-Verbose "Calculating timespan from $($reboot.LastBootUpTime)"
                    #create a timespan object and pipe to Select-Object
                    New-TimeSpan -Start $reboot.LastBootUpTime -End (Get-Date) |
                        Select-Object @{Name = "Computername"; Expression = {$Reboot.CSName}},
                            @{Name = "LastRebootTime"; Expression = {$Reboot.LastBootUpTime}},Days,Hours,Minutes,Seconds
                    #reset variable so it doesn't accidentally get re-used, especially when using the ISE
                    Remove-Variable -Name Reboot
                }
            } #foreach
        } #process

        End {
            Write-Verbose -Message "Ending $($MyInvocation.Mycommand)"
        } #end
    } #end function

Let’s go through some of the changes. First, notice that I’m using something called cmdlet binding.

[cmdletbinding()]

When PowerShell sees that, it knows to treat your function like a cmdlet, which means you get all of the common cmdlet parameters like -Verbose and -OutVariable. Personally, automatic support for -Verbose is the most important reason to use this. With it, I can insert as many Write-Verbose commands in my function as I want, and I include them from the very beginning. When I run the function normally, the messages don’t appear. But with cmdlet binding I can use the -Verbose parameter and then the messages will appear. I don’t have to code anything other than [cmdletbinding()] and my Write-Verbose commands. I use these commands to trace what the function is doing and the values of key variables.
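To see the effect in isolation, here is a minimal sketch. Test-VerboseDemo and its -Name parameter are made-up names for illustration and are not part of Get-MyUptime; the `4>&1` redirection is only there so the verbose message can be captured and inspected.

```powershell
# Hypothetical demo function, not from the original article.
function Test-VerboseDemo {
    [CmdletBinding()]
    param(
        [string]$Name = 'world'
    )

    # Only displayed when the caller passes -Verbose (or changes $VerbosePreference).
    Write-Verbose "Name parameter is '$Name'"

    "Hello, $Name"
}

# Normal run: only the output string comes back.
$quiet = Test-VerboseDemo -Name 'chi-dc04'

# Verbose run: 4>&1 merges the verbose stream into the output for inspection.
$loud = Test-VerboseDemo -Name 'chi-dc04' -Verbose 4>&1
```

With default preferences, $quiet should hold just the greeting, while $loud should hold the verbose record followed by the greeting.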

I still have a single parameter, Computername, that takes an array of strings.

[string[]]$Computername = $env:Computername

But I’ve decorated the parameter with some additional features. I defined a parameter alias.

[Alias("cn","host")]

With these aliases I can run my command in any of these ways:

get-myuptime -Computername chi-dc04

get-myuptime -cn chi-dc04

get-myuptime -host chi-dc04

This is purely optional, but can make your command easier to use. I also added a validation test.

[ValidateNotNullorEmpty()]

This ensures that the value specified for –Computername isn’t null or empty. There are all types of validation tests you can apply. I use this one all the time.
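As a rough sketch of what the validation does, the hypothetical function below (Test-ValidationDemo, with a made-up default of 'localhost') rejects an empty string before any of its own code runs. The failure surfaces as a terminating error, so a Try/Catch around the call can see it.

```powershell
# Hypothetical demo function, not from the original article.
function Test-ValidationDemo {
    [CmdletBinding()]
    param(
        [ValidateNotNullOrEmpty()]
        [string[]]$Computername = 'localhost'
    )
    $Computername
}

# A normal value passes validation and is returned.
$accepted = Test-ValidationDemo -Computername 'chi-dc04'

# An empty string fails validation before the function body runs.
$rejected = $false
try {
    Test-ValidationDemo -Computername ''
}
catch {
    $rejected = $true
    Write-Warning "Validation failed: $($_.Exception.Message)"
}
```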

Finally, I define some parameter attributes.

[Parameter(Position=0,ValueFromPipeline,ValueFromPipelineByPropertyName)]

I only have one parameter so I don’t really need to define a position for it. But if I had several parameters and I wanted some of them to be positional, I can control the order with Position=X. The next two settings could also be written like this:

…ValueFromPipeline = $True,ValueFromPipelineByPropertyName=$True)

The first entry, ValueFromPipeline, tells PowerShell to take whatever value comes into this command through the pipeline and assign it to this parameter. This allows commands like this:

"chi-dc04" | get-myuptime

As a rule, let only one parameter accept its value from the pipeline; otherwise PowerShell may not be able to tell which parameter to use. I’ve also told PowerShell to use pipeline binding by property name for this parameter. If PowerShell sees an incoming object with a Computername property, in other words the same name as the parameter, then it will assign the value to the parameter. This also applies to any aliases you have defined for the parameter. Now I can import, say, a CSV file that has a Computername column and pipe it to my command.

Import-csv computers.csv | get-myuptime
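Binding by property name can be tried without any servers or files. In this sketch, Show-TargetName is a hypothetical stand-in for Get-MyUptime that only echoes the names it receives, and the inline CSV text (with made-up contents) stands in for computers.csv.

```powershell
# Hypothetical stand-in for Get-MyUptime: it only echoes the names it was given.
function Show-TargetName {
    [CmdletBinding()]
    param(
        [Parameter(Position = 0, ValueFromPipeline, ValueFromPipelineByPropertyName)]
        [Alias('cn', 'host')]
        [string[]]$Computername
    )
    process {
        foreach ($computer in $Computername) {
            "Target: $computer"
        }
    }
}

# Inline CSV data standing in for computers.csv (contents are invented).
$byName = @'
Computername,Location
chi-dc01,Chicago
chi-dc04,Chicago
'@ | ConvertFrom-Csv | Show-TargetName

# A column named after one of the aliases is bound the same way.
$byAlias = @'
cn
chi-core01
'@ | ConvertFrom-Csv | Show-TargetName
```

The extra Location column is simply ignored, because no parameter or alias matches it.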

However, for this type of pipelined expression to work, you need to organize your function into distinct scriptblocks. If you look at my example you will see there are scriptblocks called Begin, Process and End. The Begin and End scriptblocks are optional. You only really need a Process scriptblock. In the Begin scriptblock, insert any PowerShell commands that should run before any pipelined input is processed. Don’t reference a parameter that might get input from the pipeline there, because it has not been bound yet. In the End scriptblock, insert any PowerShell commands that should run after all pipeline input is processed. The code in the Process scriptblock will run once for each pipelined object.
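The order in which the three scriptblocks run is easy to trace with a small experiment. Test-BlockOrder and its -InputItem parameter are hypothetical names used only for this sketch.

```powershell
# Hypothetical demo function, not from the original article.
function Test-BlockOrder {
    [CmdletBinding()]
    param(
        [Parameter(ValueFromPipeline)]
        [string]$InputItem
    )
    begin {
        # Runs once, before any pipeline input arrives; $InputItem is not bound yet.
        "begin: InputItem is '$InputItem'"
    }
    process {
        # Runs once for each pipelined object.
        "process: $InputItem"
    }
    end {
        # Runs once, after the last object has been processed.
        "end"
    }
}

$trace = 'chi-dc01', 'chi-dc04' | Test-BlockOrder
```

$trace should contain one begin line showing an empty value, one process line per computer name, and a final end line.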

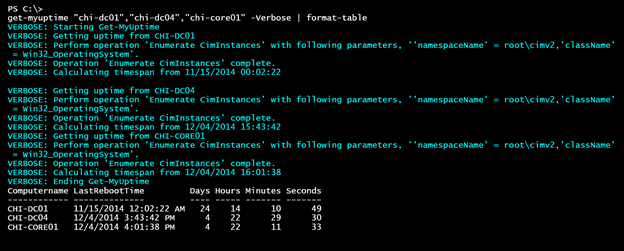

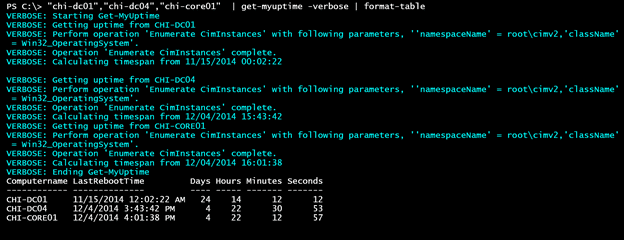

When you are designing a PowerShell function, you have to think about who will be using it and how. In this situation, I wanted to be able to handle either of these use cases.

get-myuptime "chi-dc01","chi-dc04","chi-core01" | format-table

"chi-dc01","chi-dc04","chi-core01" | get-myuptime | format-table

The first example requires the ForEach enumerator. The second requires the Process scriptblock.
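The difference between the two use cases shows up if you count how often the Process scriptblock runs. Measure-ProcessCall is a hypothetical function written for this sketch; it reports how many names each Process invocation received.

```powershell
# Hypothetical demo function, not from the original article.
function Measure-ProcessCall {
    [CmdletBinding()]
    param(
        [Parameter(Position = 0, ValueFromPipeline)]
        [string[]]$Computername
    )
    process {
        # Report how many names this single Process invocation received.
        "process call with $($Computername.Count) name(s)"
        foreach ($computer in $Computername) {
            "  $computer"
        }
    }
}

# Names passed as a parameter value: Process runs once with the whole array.
$asParameter = Measure-ProcessCall 'chi-dc01', 'chi-dc04', 'chi-core01'

# Names passed through the pipeline: Process runs once per name.
$asPipeline = 'chi-dc01', 'chi-dc04', 'chi-core01' | Measure-ProcessCall
```

In the first call Process runs once and ForEach walks the three names; in the second, Process runs three times with a single name each. Having both in place lets one function body serve either style.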

The last major change is the inclusion of error handling using Try/Catch.

    Try {
        $Reboot = Get-CimInstance Win32_OperatingSystem -ComputerName $computer -ErrorAction Stop | Select-Object CSName,LastBootUpTime
    }
    Catch {
        Write-Error $_
    }

    if ($Reboot) {
        Write-Verbose "Calculating timespan from $($reboot.LastBootUpTime)"
        ...

The important thing to remember is to limit the number of commands in a Try block, because any terminating error in it will be caught by the accompanying Catch block. But for that to happen, you have to make sure that errors become terminating errors by setting the common -ErrorAction parameter to Stop. In my function, if there is an error, the Catch block simply writes it to the error stream. There are things you can do using Break and Return, but they get complicated depending on how your command is being run. Instead, I use a simple If statement to decide if the function should continue. If Get-CimInstance ran without error, then $Reboot will have a value and evaluate to True. If the command failed, $Reboot won’t be assigned, so nothing in the If statement will run.
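The role of -ErrorAction Stop can be checked with any cmdlet that writes non-terminating errors. This sketch uses Get-Item against a path that is assumed not to exist (the file name is invented) rather than Get-CimInstance, so it does not need a remote computer.

```powershell
# A path that is assumed not to exist; the name is made up for the demo.
$missing = Join-Path ([System.IO.Path]::GetTempPath()) 'no-such-item-for-demo-12345'

$caughtWithoutStop = $false
try {
    # Non-terminating error (silenced to keep the demo quiet): Catch never runs.
    Get-Item -Path $missing -ErrorAction SilentlyContinue
}
catch {
    $caughtWithoutStop = $true
}

$caughtWithStop = $false
try {
    # -ErrorAction Stop turns the same failure into a terminating error.
    Get-Item -Path $missing -ErrorAction Stop
}
catch {
    $caughtWithStop = $true
    Write-Warning "Caught: $($_.Exception.Message)"
}
```

Only the second Try block should end up in its Catch block.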

Be careful when developing and testing your PowerShell scripts. I always test the command in the PowerShell console and NOT the ISE. The reason is that everything in the ISE runs in a single global scope, so you can accidentally reference variables left over from a previous attempt. When you look at my If statement you’ll see that I am explicitly removing $Reboot.

Remove-Variable -Name Reboot

Otherwise, when I run the command in the ISE and hit a computername that causes an error, $Reboot might still exist from the previous computer and that’s not what I want.
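A common alternative, shown here only as a sketch and not as the approach used in Get-MyUptime, is to reset the variable to $null at the top of each loop iteration so a failed attempt can never see the previous value. The example reads the first line of two files, one real temporary file and one invented path that is assumed not to exist.

```powershell
# Set up one readable file and one path that is assumed not to exist.
$good = New-TemporaryFile
Set-Content -Path $good.FullName -Value 'first line'
$bad = Join-Path ([System.IO.Path]::GetTempPath()) 'no-such-file-for-demo-12345.txt'
$paths = @($good.FullName, $bad)

$results = foreach ($path in $paths) {
    # Reset before each attempt so a failure cannot reuse the previous value.
    $firstLine = $null
    try {
        $firstLine = Get-Content -Path $path -TotalCount 1 -ErrorAction Stop
    }
    catch {
        Write-Warning "Skipping $path"
    }

    if ($firstLine) {
        [pscustomobject]@{ Path = $path; FirstLine = $firstLine }
    }
}

# Clean up the temporary file.
Remove-Item -Path $good.FullName
```

Only the readable file should produce an output object; the missing one is skipped with a warning.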

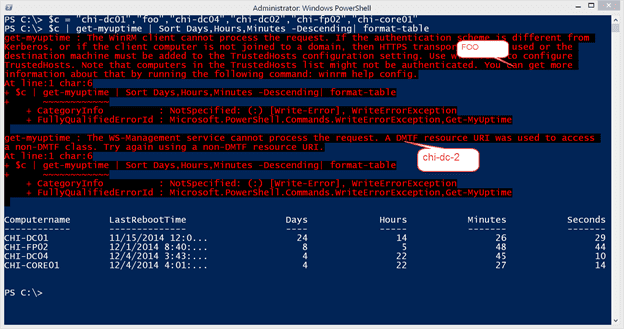

The end result is something much more effective:

$c = "chi-dc01","foo","chi-dc04","chi-dc02","chi-fp02","chi-core01"

$c | get-myuptime | Sort Days,Hours,Minutes -Descending| format-table

I’ve inserted a bogus computer name and one for a server that is running PowerShell 2.0 so you can see the error handling at work.

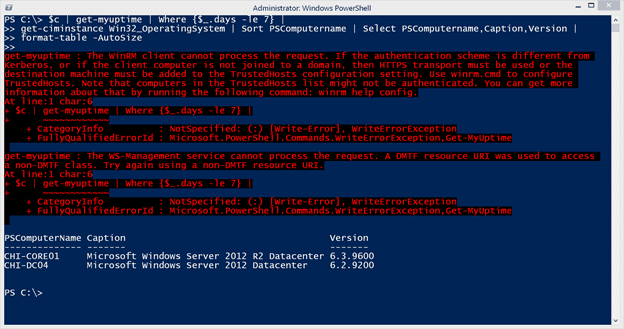

And because I am writing an object to the pipeline, if I plan ahead I can write something that integrates with other PowerShell commands. If you were paying attention, you may also have noticed that I changed the property name on my output to Computername.

That’s because many cmdlets use that parameter name and often take pipeline binding by property name. What that means for me is that I can use my new command to do more than simply return uptime.

    $c | get-myuptime | Where {$_.days -le 7} |
        get-ciminstance Win32_OperatingSystem | Sort PSComputername | Select PSComputername,Caption,Version |
        format-table -AutoSize

From my list of servers I want to find all computers rebooted in the last 7 days and display a report showing the operating system version information, formatted as a nice table.

I hope you’ve been enjoying the journey from simple commands to re-usable tool. There’s much more to our journey but I think we’ll rest for now. When we resume, we’ll continue down the path of PowerShell tool development.